DLBIRHOUI

Deep Learning Based Image Reconstruction for Hybrid Optoacoustic and Ultrasound Imaging

Abstract

Over the last decade, the Razansky lab has been instrumental in the development of multi-spectral optoacoustic tomography (MSOT), taking this novel bio-imaging technology from the initial demonstration of technical feasibility, through the establishment of image reconstruction methodologies, all the way to clinical translation. The method is rapidly finding its place as a potent clinical imaging tool owing to its high sensitivity and molecular specificity, as well as its non-invasive, real-time, high-resolution volumetric imaging capabilities deep in living biological tissues. Despite the great promise demonstrated in pilot clinical studies, human imaging with MSOT suffers from limited tomographic access to the region of interest, and light deposition in deep tissues is subject to significant constraints. This project aims to develop new artificial intelligence capabilities for improving the image quality and diagnostic capacity of MSOT images acquired with sub-optimal scanner configurations, which result from, e.g., application-related constraints or low-cost design considerations. In particular, we will devise machine learning approaches to enable efficient and robust multimodal combination of MSOT with pulse-echo ultrasonography by training neural networks on high-resolution, high-quality datasets generated by dedicated, optimally designed scanner configurations. The trained models will be used to restore the quality of artifactual images produced by various sub-optimal scanner configurations with limited tomographic view or sparsely acquired data in typical clinical imaging scenarios. These advancements will help reduce inter-clinician variability and enable a more efficient, rapid, and objective analysis of large amounts of image data, thus relaxing requirements for specialized training and facilitating wider adoption of MSOT in primary care and other non-hospital settings.

People

Collaborators

Anna joined the SDSC as a Senior Data Scientist in 2020. She is a statistician by training, with a Master’s degree with Honors in Mathematical Statistics from Lomonosov Moscow State University. Anna received her PhD from ETH Zurich in 2018, where she worked on causal structure learning for protein signaling pathways. During her studies she did an internship at Facebook AI Research in New York, working on the discovery of hierarchies from data using hyperbolic geometry. She later joined Facebook AI Research in Paris as a postdoctoral researcher, where she worked on out-of-distribution prediction of unseen drug combinations. Broadly, her research interests are in unsupervised and self-supervised learning, domain adaptation, and generalisation.

Firat completed his undergraduate studies in Electronics Engineering at Sabanci University. He later received his MSc in Electrical and Electronics Engineering from EPFL. He conducted his doctoral studies on medical image segmentation in the Computer Vision Lab at ETH Zurich. In between, he visited INRIA (Sophia Antipolis, France) and the ABB Corporate Research Center (Baden, Switzerland). His research interests revolve around computer vision and machine learning, with a focus on the medical domain. He has been with SDSC since 2019.

PI | Partners:

Goals:

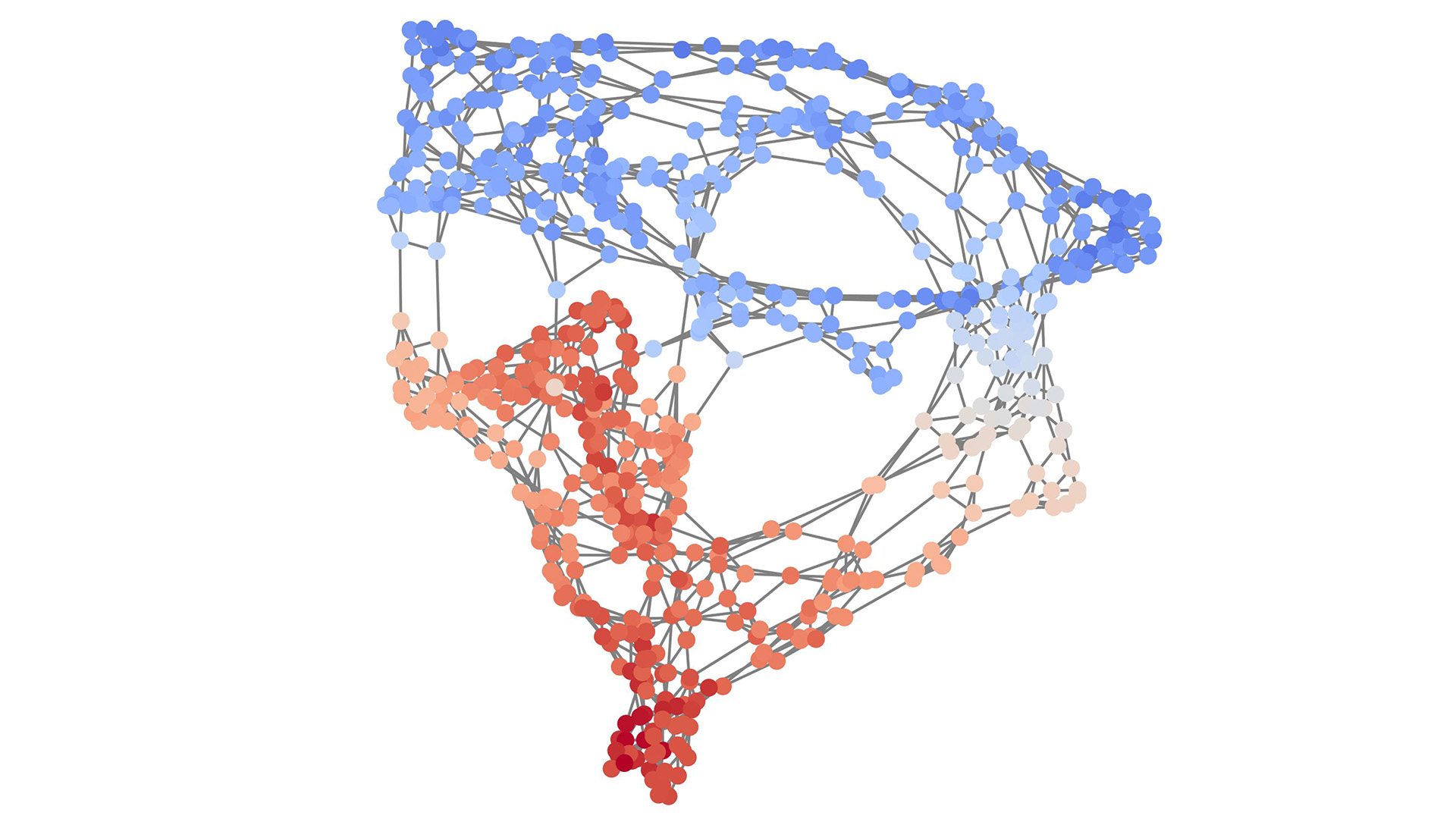

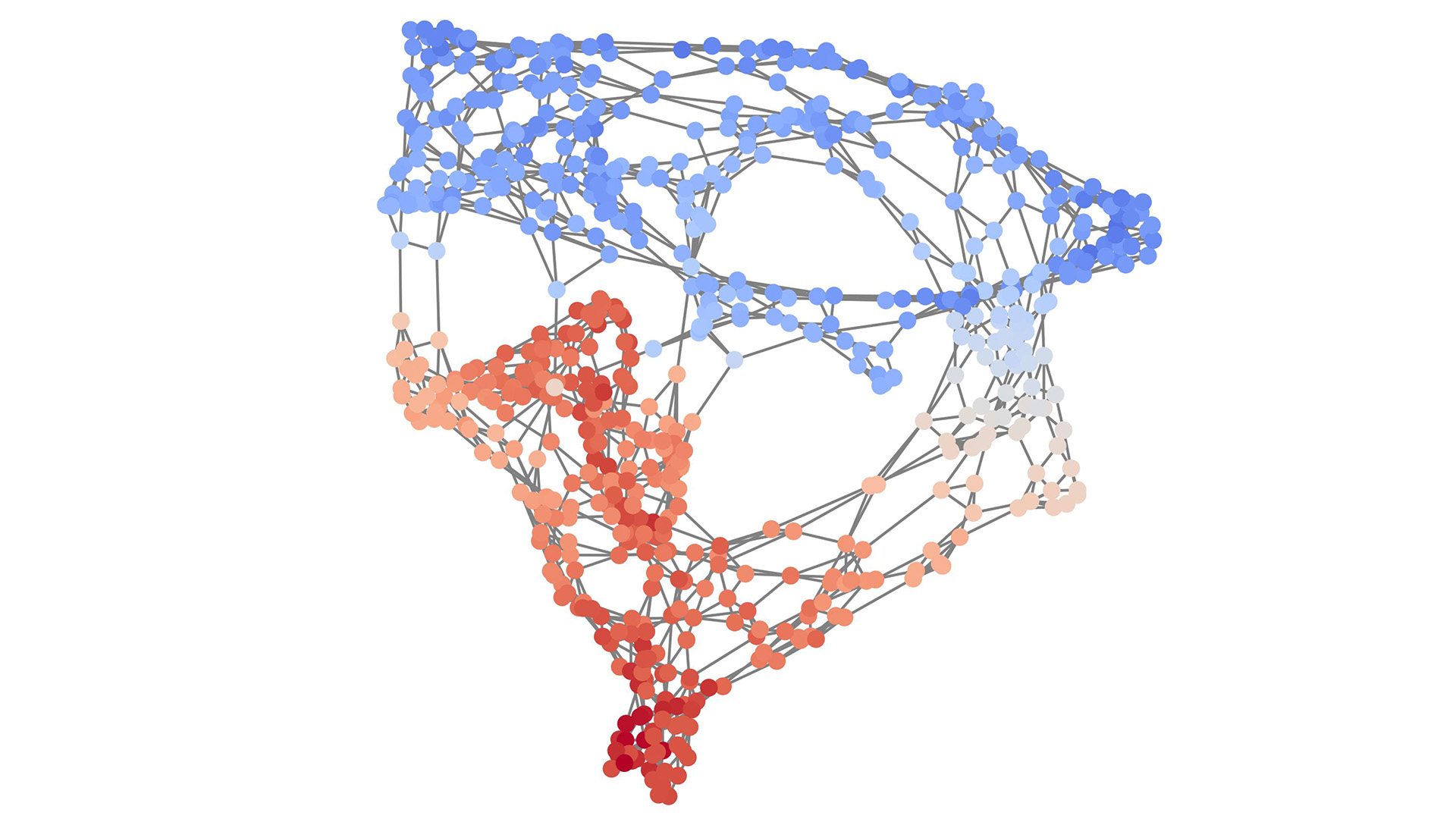

- Devising deep learning approaches to enable accurate reconstruction of 2D and 3D multi-spectral optoacoustic tomography (MSOT) images from artifactual data recorded by sub-optimal imaging systems.

- Development of accurate automatic segmentation and image improvement approaches for multimodal hybrid optoacoustic ultrasound (OPUS) images.

- Correcting for the common MSOT image artefacts present in the images acquired under typical handheld clinical imaging scenarios.

Approach:

- Explore data science approaches, in both the acquired-signal domain and the reconstructed-image domain and with both paired and unpaired data, for accurate reconstruction of the scene from limited-view input.

- Explore data science approaches for segmentation of structures of interest (e.g., blood vessels) relying on weak annotations or different image domains (e.g., simulated data).
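As a rough illustration of how paired signal-domain training data for limited-view or sparsely sampled acquisition might be prepared, the sketch below degrades a fully sampled recording by zeroing transducer channels. It is a minimal sketch under stated assumptions, not the project's actual pipeline: the function name `make_limited_view_pair`, the `(n_channels, n_samples)` array layout, and the centred-aperture masking scheme are all hypothetical choices made for illustration.

```python
import numpy as np


def make_limited_view_pair(signals, view_fraction=0.5, sparse_step=1):
    """Build a (degraded, full, mask) triple from a fully sampled recording.

    Hypothetical helper: `signals` is assumed to have shape
    (n_channels, n_samples), one row per transducer element.
    """
    if signals.ndim != 2:
        raise ValueError("signals must have shape (n_channels, n_samples)")
    if not 0.0 < view_fraction <= 1.0:
        raise ValueError("view_fraction must be in (0, 1]")
    if sparse_step < 1:
        raise ValueError("sparse_step must be >= 1")

    n_channels = signals.shape[0]
    # Limited view: keep only a contiguous, centred block of channels.
    n_kept = max(1, int(round(n_channels * view_fraction)))
    start = (n_channels - n_kept) // 2

    # Sparse sampling: within that block, keep every `sparse_step`-th channel.
    mask = np.zeros(n_channels, dtype=bool)
    mask[start:start + n_kept:sparse_step] = True

    # Channels outside the mask are zeroed; the full recording is the target.
    degraded = np.where(mask[:, None], signals, 0.0)
    return degraded, signals, mask


# Usage with synthetic data standing in for a real recording.
rng = np.random.default_rng(0)
full_recording = rng.standard_normal((256, 100))
degraded, target, mask = make_limited_view_pair(
    full_recording, view_fraction=0.5, sparse_step=2
)
```

A network trained on such pairs would take `degraded` as input and `target` as the reference. Masking real, fully sampled recordings is only one possible way to obtain pairs, and it does not cover the unpaired setting mentioned above.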

Impact:

- MSOT is a relatively new modality among medical imaging approaches. It has many desirable properties, such as real-time acquisition and high resolution. Image contrast arises from differences in how tissues absorb light at different wavelengths, offering new insight into non-invasive imaging of tissue close to the surface, with no known side effects on the imaged body (e.g., no ionizing radiation). An initial application sought for MSOT is the detection of cancerous tissue based on oxygen consumption of cystic bodies. Another field of application is the assessment of lipid residue within vessels (e.g., the carotid artery).
